@amadeodum, you are right. You should use the ord function, but only in the sum condition; the t - t’ in the variable acts as a lead/lag operator.

Try the following and let me know whether it works as you expect:

sum[(l,u) | ord(u) <= FermTime(l), Y(o,l,t-ord(u))] <= 1
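To check what this constraint enforces outside AIMMS, here is a small Python sketch of its left-hand side for each (o, t). It is only an illustration, not AIMMS code: Y is a hypothetical dict of 0/1 values keyed by (o, l, t), and a lag that falls before the first period is assumed to contribute 0 (in AIMMS a lag past the start of the set yields an empty element).

```python
from itertools import product


def lagged_sum(Y, periods, o, t, ferm_time):
    """Left-hand side of sum[(l,u) | ord(u) <= FermTime(l), Y(o,l,t-ord(u))].

    For each l, look back k = 1..FermTime(l) positions from t (k plays the
    role of ord(u)). Lags before the first period contribute 0 (assumption).
    """
    pos = periods.index(t)  # 0-based position of t in the ordered set
    total = 0
    for l, ft in ferm_time.items():
        for k in range(1, ft + 1):
            if pos - k >= 0:
                total += Y.get((o, l, periods[pos - k]), 0)
    return total


def constraint_holds(Y, orders, periods, ferm_time):
    """True if the constraint is satisfied for every (o, t)."""
    return all(lagged_sum(Y, periods, o, t, ferm_time) <= 1
               for o, t in product(orders, periods))


# Hypothetical data: T = {0..3}, FermTime(1) = 2, FermTime(2) = 1.
periods = [0, 1, 2, 3]
ferm_time = {1: 2, 2: 1}
Y_ok = {("A", 1, 0): 1}
Y_bad = {("A", 1, 0): 1, ("A", 2, 1): 1}
print(constraint_holds(Y_ok, ["A"], periods, ferm_time))   # True
print(constraint_holds(Y_bad, ["A"], periods, ferm_time))  # False
```

In the second case both Y(A,1,0) and Y(A,2,1) fall inside the look-back window of t = 2, so the sum reaches 2 and the constraint is violated.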

@mohansx, it works!

Thank you for your help :)

@amadeodum, I assume you want this constraint to apply for all (o, t) and that Delta(l) is a parameter? If so, the index domain of your constraint must be

(o,t)

Declare a second index t’ on your set of time periods, as described here: https://how-to.aimms.com/Articles/184/184-use-multiple-indices-for-set.html

Your constraint definition can then be:

sum[(l, t’) | (t’ <= Delta(l)), Y(o, l, t - t’)] <= 1

I am assuming that your set L starts from 1 and set T starts from 0.
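Because T starts from 0, an element's label and its ord differ by one, so a condition on ord(u) and a condition on the element value itself select different periods. The Python sketch below illustrates this; it assumes a hypothetical T = {0, ..., 5} and assumes that comparing an element of an integer set with a number uses the element's numeric value. The helper names are made up for illustration.

```python
# Hypothetical integer set T = {0, 1, ..., 5}; AIMMS ord() is 1-based.
periods = list(range(6))


def ord_of(t):
    """1-based position of element t in periods, like AIMMS ord(t)."""
    return periods.index(t) + 1


def selected_by_ord(d):
    """Elements u with ord(u) <= d: the first d periods."""
    return [u for u in periods if ord_of(u) <= d]


def selected_by_value(d):
    """Elements u with u <= d, comparing the numeric label (assumption)."""
    return [u for u in periods if u <= d]


print(selected_by_ord(2))    # [0, 1]
print(selected_by_value(2))  # [0, 1, 2]
```

With d = 2 the ord-based condition picks two periods, while the value-based one picks three, so it is worth checking which window length the model actually needs.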

@mohansx, thank you for your answer.

Your assumptions are right; I forgot to mention them.

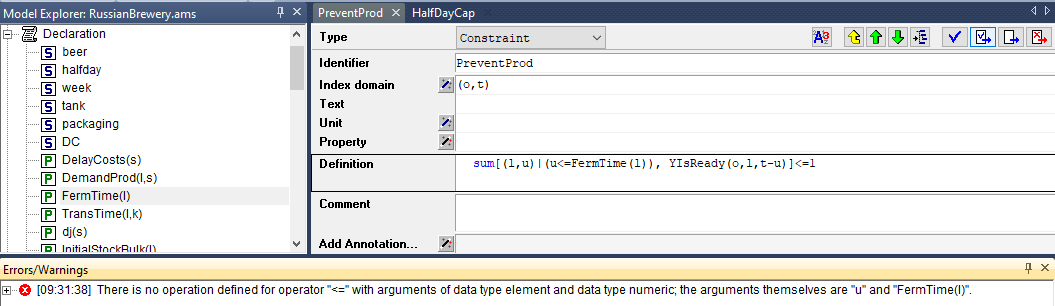

However, it does not work when I implement your constraint in AIMMS.

(I replaced t’ with u and Delta(l) with FermTime(l).)

Shouldn’t I write the following instead?

sum[(l,u) | ord(u) <= FermTime(l), Y(o,l,ord(t)-ord(u))] <= 1

Or does that change the meaning of the constraint?

Regards,