We were struggling with the solve time of this model, especially because it grew exponentially as the models got larger. Back in January, we started investigating the cause together with AIMMS. The start of that investigation was daunting, however: no matter what we tried (model reformulation, scaling, different solvers, or different solver settings), performance did not improve significantly.

We concluded that, if all else failed, we could always solve the individual time periods in the model one by one, because those time periods are not actually connected to each other. To efficiently split the generated matrix into smaller independent submatrices, solve them one by one, and merge the results back into a single solution, AIMMS developed the CreateBlockMatrices and SendToModelSelection functionality. This turned out to be a great success in improving the performance of this model.
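To make the idea concrete, here is a minimal Python sketch of the general technique: detect which constraints and variables form independent blocks, solve each block separately, and merge the partial solutions. It is not the AIMMS implementation and does not use the AIMMS functions named above. The function names `find_independent_blocks` and `solve_by_block`, the `solve_block` callback, and the constraint and variable names in the usage note are all hypothetical.

```python
from collections import defaultdict


def find_independent_blocks(nonzeros):
    """Group rows and columns of a sparse matrix into independent blocks.

    `nonzeros` is an iterable of (row, column) pairs, one per nonzero
    coefficient. Two rows land in the same block when they share a column,
    directly or through a chain of other rows.
    """
    parent = {}

    def find(node):
        # Walk up to the root of the set, shortening the path on the way.
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]
            node = parent[node]
        return node

    for row, col in nonzeros:
        # Tag nodes so a row and a column with the same name stay distinct.
        root_row, root_col = find(("row", row)), find(("col", col))
        if root_row != root_col:
            parent[root_row] = root_col

    blocks = defaultdict(lambda: {"rows": set(), "cols": set()})
    for kind, name in list(parent):
        blocks[find((kind, name))][kind + "s"].add(name)
    # Sort for a deterministic order; every block has at least one row.
    return sorted(blocks.values(), key=lambda block: min(block["rows"]))


def solve_by_block(nonzeros, solve_block):
    """Solve each independent block on its own and merge the results.

    `solve_block(rows, cols)` is a caller-supplied (hypothetical) function
    that returns a dict mapping each column in the block to its value.
    """
    solution = {}
    for block in find_independent_blocks(nonzeros):
        solution.update(solve_block(block["rows"], block["cols"]))
    return solution
```

For example, with two time periods that share no variables, the sketch finds two blocks:

```python
nonzeros = [
    ("balance_t1", "x_t1"), ("balance_t1", "y_t1"), ("capacity_t1", "y_t1"),
    ("balance_t2", "x_t2"), ("capacity_t2", "x_t2"), ("capacity_t2", "y_t2"),
]
blocks = find_independent_blocks(nonzeros)
# blocks[0] holds the t1 constraints and variables, blocks[1] those of t2.
```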

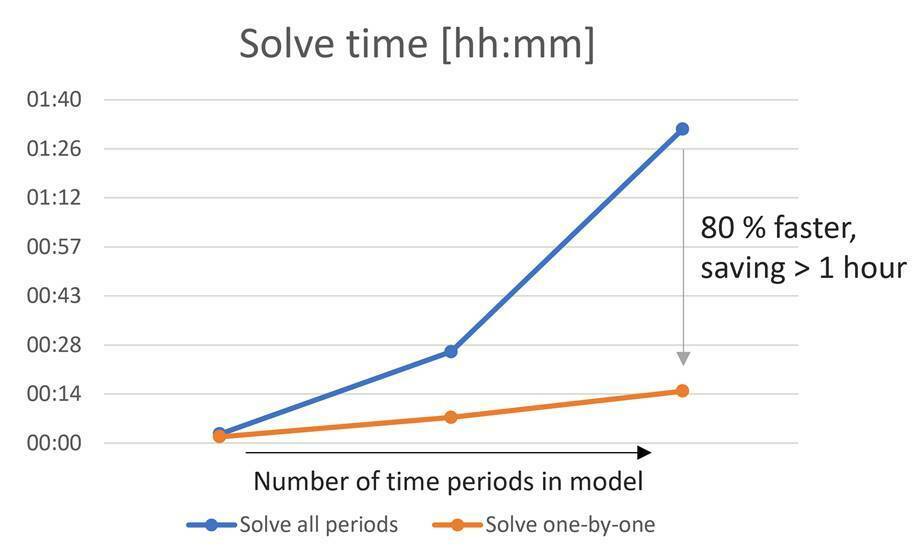

Following the release of the new AIMMS functionality, we implemented this solution method in our application. The graph shows how drastically the solve time of this model has decreased as a result. For our larger models, it drops from about 1.5 hours to about 15 minutes.

The new approach would even allow us to solve the individual time periods in parallel on AIMMS PRO, but we have not implemented that yet, so there is still room for further improvement.
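As a rough illustration of why independent time periods lend themselves to parallel solving, the sketch below dispatches one solve per period to a worker pool and merges the results. It uses Python's standard `concurrent.futures` module, not AIMMS PRO. `solve_period` is a hypothetical stand-in for a real solver call, and the variable names it returns are made up.

```python
from concurrent.futures import ThreadPoolExecutor


def solve_period(period):
    """Hypothetical stand-in for solving one time period's submodel."""
    # A real implementation would call a solver here; this toy version
    # returns a single made-up variable per period.
    return {f"x_{period}": 0.0}


def solve_periods_in_parallel(periods, max_workers=4):
    """Solve independent periods concurrently and merge their solutions."""
    merged = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in input order, so the merge is deterministic.
        for partial in pool.map(solve_period, periods):
            merged.update(partial)
    return merged
```

Because the periods share no variables, the partial solutions never overlap and can be merged with a plain dictionary update. Whether threads give a real speedup depends on the solver: in CPython they only help if the solve releases the interpreter lock or runs in a separate process.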

On behalf of our users, a big thank you to AIMMS for your support on this. Thanks to this new functionality, our users can now run their analyses much faster, which greatly improves their daily workflow. They are really pleased with the speed at which they can now run their models, and I wanted to pass their compliments on to AIMMS as well.